Tradeoffs in BFT: latency vs. robustness

Modern Byzantine Fault Tolerant (BFT) consensus protocols typically operate under the partial synchrony model. This model assumes that the network eventually stabilizes and message delays remain bounded. While practical for protocol design, real-world deployments rarely enjoy long periods of uninterrupted stability. Instead, systems frequently experience periods of synchrony followed by short disruptions such as latency spikes, node outages, or adversarial conditions. These transient disruptions are referred to as “blips”. Under such conditions, existing consensus protocols are forced to choose between low latency in stable network conditions and robustness in the presence of faults.

- Traditional view-based BFT protocols, such as PBFT and HotStuff, are optimized for responsiveness during good intervals when the network is stable. However, they suffer from degraded performance when a blip occurs. This degradation, known as a hangover, can persist even after the network has recovered, as backlogged requests accumulate and delay subsequent transactions.

- DAG-based BFT protocols, such as Narwhal & Tusk/Bullshark, decouple data dissemination (DAG) from consensus (BFT) and propagate transactions asynchronously across replicas. This design enables high throughput and allows the system to continue making progress during network disruptions. However, these protocols tend to incur high latency even during good intervals due to the complexity of their asynchronous ordering mechanisms.
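
To make the hangover effect concrete, here is a toy queueing model in Python. It is only a sketch: the function name `hangover_seconds`, the rates, and the assumption that nothing commits during the blip are all illustrative and do not come from any measurement of PBFT or HotStuff.

```python
def hangover_seconds(arrival_tps: float, capacity_tps: float, blip_seconds: float) -> float:
    """Time needed after a blip to drain the backlog in a toy queue model.

    Assumptions (hypothetical): no request commits during the blip, and
    afterwards the protocol commits at a fixed capacity while new requests
    keep arriving at the same rate.
    """
    if capacity_tps <= arrival_tps:
        raise ValueError("capacity must exceed the arrival rate to ever catch up")
    backlog = arrival_tps * blip_seconds          # requests queued during the blip
    drain_rate = capacity_tps - arrival_tps       # net progress against the backlog
    return backlog / drain_rate


# Illustrative numbers only: a 1 s blip at 8,000 tx/s with 10,000 tx/s capacity.
print(hangover_seconds(8_000, 10_000, 1.0))  # 4.0
```

In this model a one-second blip costs four further seconds of elevated latency, which is why a protocol that must work through its backlog request by request recovers slowly when it runs close to capacity.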

Autobahn architecture overview

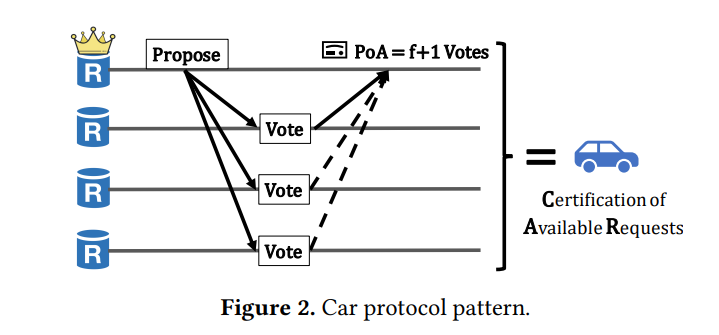

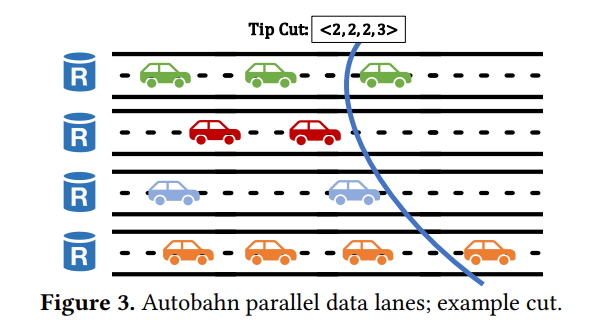

Autobahn is architected around a clear separation of responsibilities between its two core layers: a data dissemination layer and a consensus layer. This decoupling is inspired by the design of DAG-based systems like Narwhal, but Autobahn enhances this structure to support seamlessness and lower latency.

The data dissemination layer is responsible for broadcasting client transactions in a scalable, asynchronous manner. It allows each replica to maintain its own lane of transaction batches, which can be propagated and certified independently of the consensus state. These lanes grow continuously, even when the consensus process stalls, ensuring that the system remains responsive to clients at all times.

On top of this, Autobahn runs a partially synchronous consensus layer based on a PBFT-style protocol. However, instead of reaching agreement on individual batches of transactions, consensus is reached on “tip cuts,” which are compact summaries of the latest state of all data lanes. This design allows Autobahn to commit arbitrarily large amounts of data in a single step, minimizing the impact of blips.

HotStuff tightly couples data and consensus, causing stalls when a leader fails. Bullshark incurs high commit latencies due to DAG traversal and data synchronization. Autobahn provides a smoother and faster consensus experience, inheriting the parallelism of DAGs while avoiding their latency pitfalls.

Data dissemination layer: lanes and cars
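
The per-replica lanes and the tip cut described in the architecture overview can be pictured with a small Python sketch. The names `Batch`, `Lane`, and `tip_cut` are hypothetical, chosen for this example, and the certification of batches is left out entirely; this is an illustration of the data layout under those assumptions, not Autobahn's implementation.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Batch:
    lane_id: int            # replica that owns the lane
    position: int           # index of this batch within the lane
    txs: tuple[str, ...]    # client transactions carried by the batch


@dataclass
class Lane:
    owner: int
    batches: list[Batch] = field(default_factory=list)

    def append(self, txs) -> Batch:
        """Extend the lane; this does not depend on any consensus state."""
        batch = Batch(self.owner, len(self.batches), tuple(txs))
        self.batches.append(batch)
        return batch

    def tip(self) -> int:
        """Position of the latest batch, or -1 if the lane is empty."""
        return len(self.batches) - 1


def tip_cut(lanes: dict[int, Lane]) -> dict[int, int]:
    """Compact summary of all lanes: one tip position per replica."""
    return {owner: lane.tip() for owner, lane in lanes.items()}


# Four replicas, each with its own lane; only some have data so far.
lanes = {r: Lane(r) for r in range(4)}
lanes[0].append(["tx1", "tx2"])
lanes[0].append(["tx3"])
lanes[2].append(["tx4"])
print(tip_cut(lanes))  # {0: 1, 1: -1, 2: 0, 3: -1}
```

The cut stays the same size no matter how many batches each lane holds, which is what lets consensus agree on a summary rather than on the data itself.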

Consensus layer: low-latency agreement
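
Because consensus operates on tip cuts rather than on individual batches, a single decision can cover everything that was disseminated while consensus was stalled. The Python sketch below illustrates that idea only. It is not Autobahn's actual commit rule: `commit_tip_cut`, the dictionary layout, and the lane-by-lane ordering are assumptions made for the example.

```python
def commit_tip_cut(lanes, last_committed, cut):
    """Commit every batch between the last committed position and the cut.

    lanes:          lane id -> list of batch identifiers, in lane order
    last_committed: lane id -> last committed position (missing means none)
    cut:            lane id -> tip position agreed on by consensus
    Returns newly committed batches, ordered by lane id and then position
    (an assumed, deterministic ordering for this sketch).
    """
    newly_committed = []
    for lane_id in sorted(cut):
        previous = last_committed.get(lane_id, -1)
        tip = cut[lane_id]
        # Empty slice when the cut does not advance past what is committed.
        newly_committed.extend(lanes[lane_id][previous + 1:tip + 1])
        last_committed[lane_id] = max(previous, tip)
    return newly_committed


# Lane 0 built up a backlog during a blip; one agreed cut commits all of it.
lanes = {0: ["a0", "a1", "a2"], 1: ["b0"], 2: []}
last_committed = {0: 0}
cut = {0: 2, 1: 0, 2: -1}
print(commit_tip_cut(lanes, last_committed, cut))  # ['a1', 'a2', 'b0']
```

Note that only the agreement step is a single decision here; applying the commit still touches every batch in the backlog.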

Key properties of Autobahn

Autobahn satisfies the standard safety and liveness guarantees expected from BFT protocols. Safety ensures that no two correct replicas commit different blocks for the same slot. Liveness guarantees progress after global stabilization time (GST) as long as a correct leader is eventually selected.

More importantly, Autobahn achieves seamlessness. It avoids protocol-induced hangovers by allowing the consensus layer to commit arbitrarily large data backlogs in constant time. Even after a blip, as soon as synchrony returns, all data proposals that were successfully disseminated can be committed immediately. This enables Autobahn to operate smoothly in environments with intermittent faults, outperforming traditional BFT protocols in both recovery time and system responsiveness.

In addition, the protocol scales horizontally. Each replica contributes to the system’s throughput via its own lane, and consensus cuts grow naturally with the number of participants. This makes Autobahn suitable for large-scale deployments requiring both high performance and robustness.

Low latency meets high resilience

Autobahn was evaluated against leading BFT protocols, particularly Bullshark and HotStuff, under both ideal and fault-injected conditions. The results demonstrate that Autobahn achieves the best of both worlds: it matches Bullshark’s throughput, processing over 230,000 transactions per second, while reducing its latency by more than 50%. Under good network conditions, Autobahn commits transactions with just 3 to 6 message delays, compared to Bullshark’s 12. This translates to commit latencies as low as 280ms in practice, versus over 590ms for Bullshark.

Unlike HotStuff, which suffers from long hangovers after blips due to backlog processing delays, Autobahn commits its entire backlog in a single step as soon as the network stabilizes. In scenarios involving leader failures or partial network partitions, Autobahn demonstrates seamless recovery. It continues disseminating data during faults and quickly commits accumulated proposals once consensus resumes. These performance advantages make Autobahn a compelling choice for blockchain platforms seeking to combine low-latency responsiveness with high throughput and fault tolerance.

Further reading

For more technical deep dives and details, refer to:

Next recommended

Consensus

Return to StableBFT, the consensus implementation that Autobahn evolves.

Finality

Use Stable’s single-slot finality when building against the RPC.